Solved

Optimizing EDS SEO score

- November 26, 2024

Hey guys,

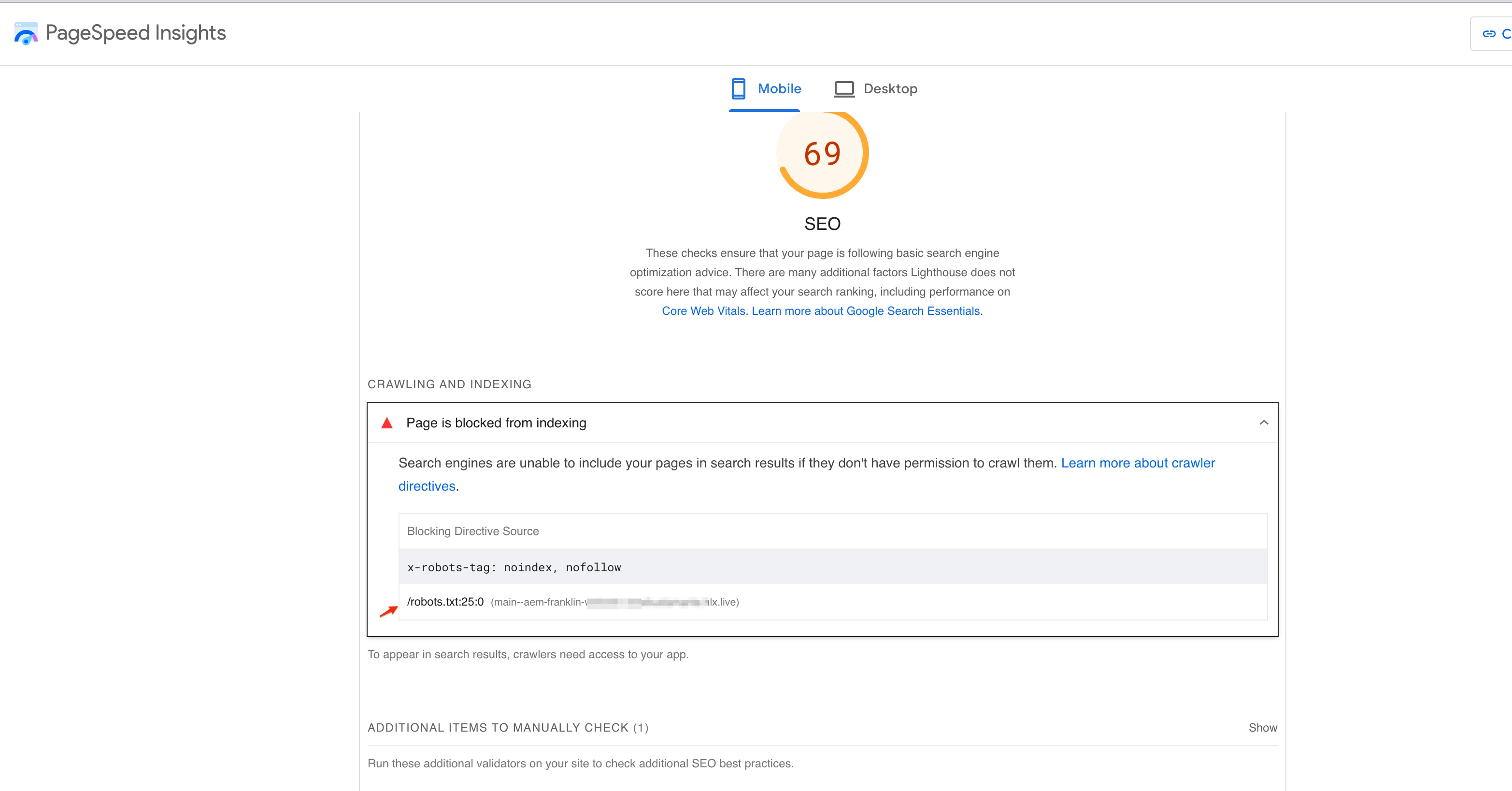

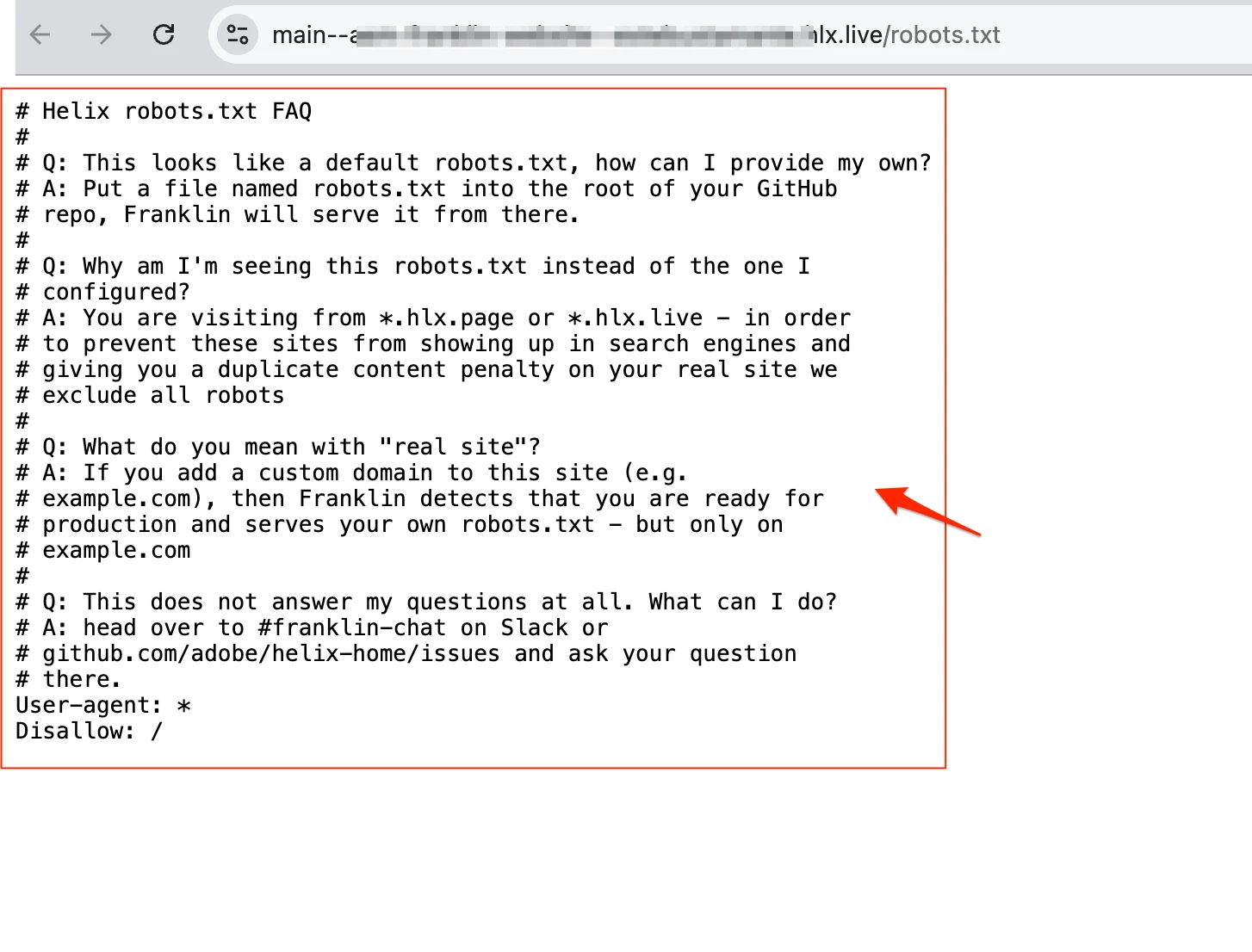

I recently ran Lighthouse against my published EDS (Edge Delivery Services) website and noticed that the SEO score was quite low. To better understand the issue, I also checked the SEO score of the boilerplate, and it is low as well.

I’ve already made sure that metadata is included for each index page, but it looks like the pages might be blocked from web crawlers. Has anyone else run into similar SEO problems with EDS sites? If so, I’d appreciate any insights or suggestions on how to address this and improve the score.
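For anyone who wants to reproduce the check outside Lighthouse: one way to confirm whether a page is really blocked from indexing is to look for a `noindex` directive in the `X-Robots-Tag` response header or in a `<meta name="robots">` tag, which are two of the signals Lighthouse's crawlability audit looks at. Below is a minimal Python sketch of that idea. The names `indexing_blocked` and `check_url` are just illustrative, not part of any EDS tooling, and the sketch does not look at `robots.txt`.

```python
from html.parser import HTMLParser
from urllib.request import urlopen


class RobotsMetaParser(HTMLParser):
    """Collects the content of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key.lower(): (value or "") for key, value in attrs}
        if attr.get("name", "").lower() == "robots":
            self.directives.append(attr.get("content", "").lower())


def indexing_blocked(headers, html):
    """Return True if the X-Robots-Tag header or a robots meta tag says noindex."""
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()
    parser = RobotsMetaParser()
    parser.feed(html)
    in_meta = any("noindex" in directive for directive in parser.directives)
    return "noindex" in header_value or in_meta


def check_url(url):
    """Fetch a page and report whether it carries a noindex directive."""
    with urlopen(url) as response:
        html = response.read().decode("utf-8", errors="replace")
        return indexing_blocked(dict(response.headers.items()), html)
```

Calling `check_url(...)` on both the preview URL and the production domain should show whether the two are served with different robots directives.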

Thanks in advance for your help!
